The day local AI finally felt ready

I'm building a game with zero cloud API calls

Eight months ago, I bought an RTX 3090 with a simple goal: run my own AI locally. No cloud providers. No privacy trade-offs. Just me, my hardware, and open-weight models.

It wasn't the first time I tried to get along with typical cloud providers and host my own services. I always thought the extreme SaaS and cloud trends were not especially good for users, especially regarding privacy. So I always tried to deploy open-source alternatives to typical cloud-based services for my friends, family, and others.

Not to mention the typical “enshittification“ process that most of these subscription models lead to. In the case of LLM providers, it is extremely pronounced. You start paying X for Y service and then, you get either “nerfed“ models or less usage for the same price you start paying for. I strongly believe this should be regulated at some point.

When I tried the first open-weights models running on CPU and modest GPUs, it was a “wow“ moment. I thought local-first agentic coding was close. But I was wrong; by then, models were not trained well enough to call tools consistently on customer-grade hardware, reserving that job for huge models provided by OpenAI, Anthropic, etc. So I kept playing with every new open-weight model, frustrated that it could not work in agentic mode. In the meantime, I obviously kept working (and paying) for commercial models as a service. My hope nearly disappeared, given the memory crisis and seeing that all new Nvidia boards were super-expensive and accessible only to professional use. Upgrading hardware to run local models would not be an option for me. I thought I would never run my own agents locally. I gained some hope when I tried GLM 4.7 Flash, but it was still unreliable for serious work.

Then, less than two months ago, Qwen 3.5 appeared. This model changed everything. On its smaller sizes (8b, 35b-A3b, 27b) it consistently calls tools. It does a great job on my limited hardware (24G VRAM). It was a "FINALLY!!! 🎉" moment. That’s not the end! There is research from Google called TurboQuant that seems it will drastically reduce memory usage of models by compressing their KV without losing quality. That would mean being able to run bigger models on the same hardware.

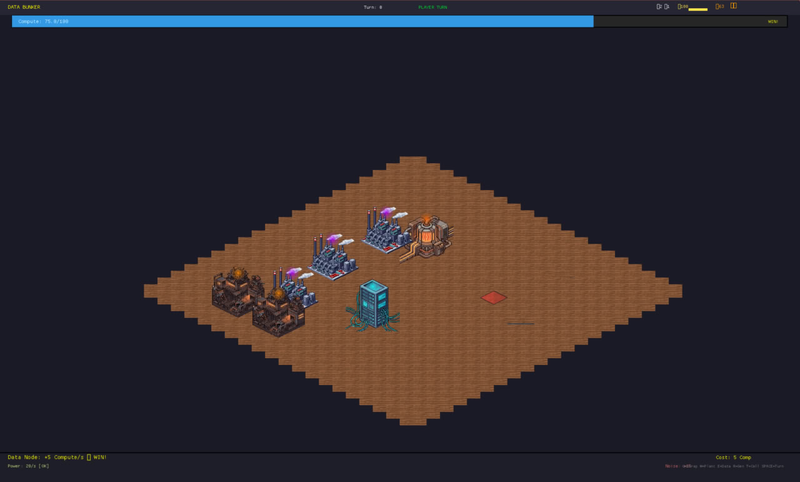

So I decided to create a game using totally extraneous technology for me (Rust + WASM) with a very simple constraint: I will create it using exclusively local models. No LLM cloud provider can be used in the coding process (only for asset generation will I delegate it to the amazing retrodiffusion.ai service).

The game has no name yet, but it has a plot. In a post-apocalyptic world, humanity has taken refuge from AI in vault bunkers. To fight back the surface AI-controlled world, you need to build a competent guerrilla data center without being noticed by sentinels. You win if you accumulate enough compute power without being noticed. Once I have something more fun to play with, I will publish it so everyone can play it :)

Stay tunned!

Comments

No comments yet. Be the first to comment!